Goodness of fit

Outline

Topics

- General notion of goodness-of-fit checks.

- Specific example for Bayesian models: posterior predictive checks.

- Limitations.

Rationale

Is your statistical model missing some critical aspect of the data? We have approached this question in a qualitative way earlier in the course. Today, we provide a more quantitative approach. In practice, both qualitative and quantitative model criticism are essential ingredients of an effective Bayesian data analysis.

What is goodness-of-fit?

- Goodness-of-fit: a procedure to assess if a model is good (approximately well-specified) or bad (grossly mis-specified).

- Applies to both Bayesian and non-Bayesian models; we focus on the former today.

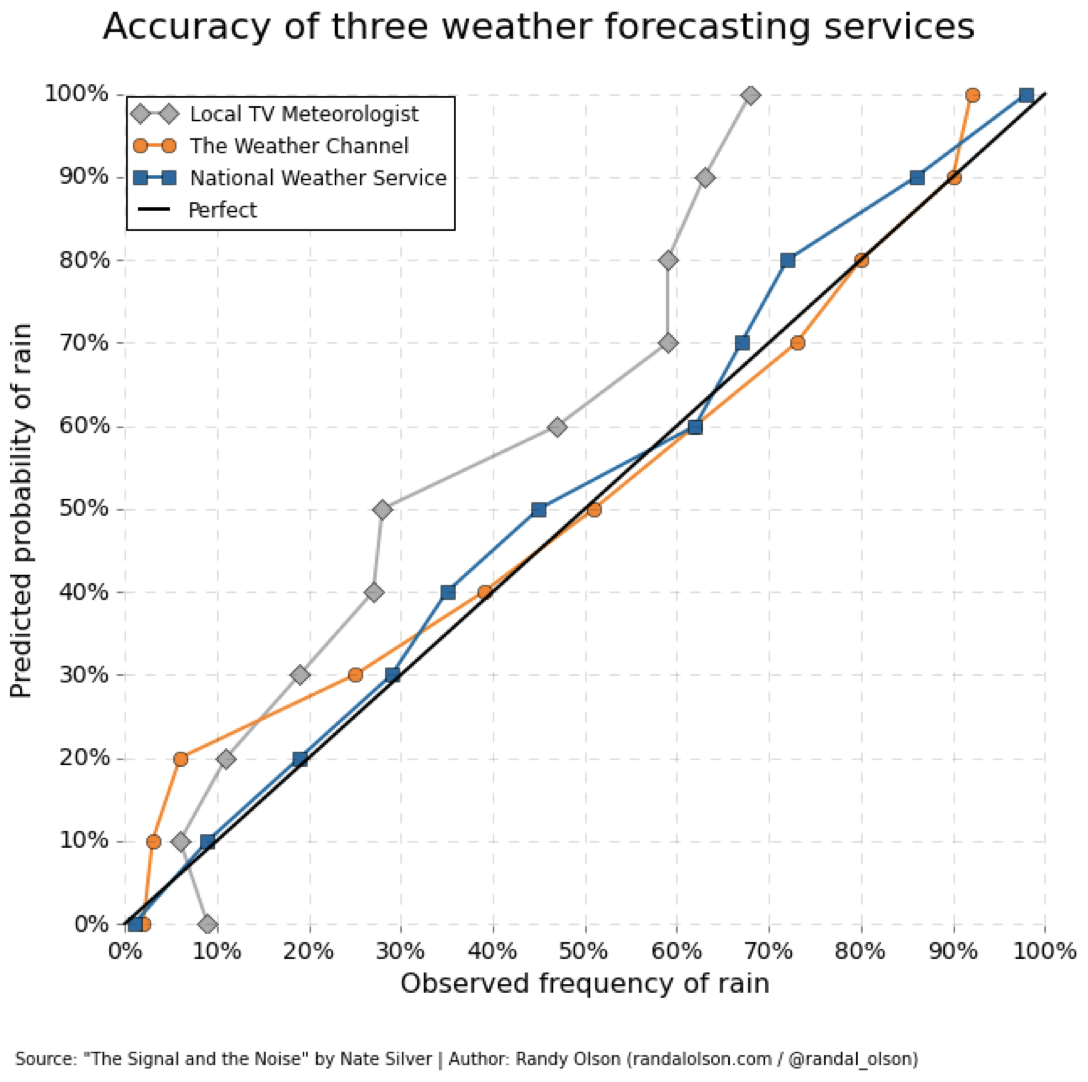

Review: calibration

To understand today’s material we need to review the notion of calibration of credible intervals.

Question: for well-specified models, credible intervals are…

- calibrated for small data, calibrated for large data

- not calibrated for small data, calibrated for large data

- only approximately calibrated for both small and large data

- none of the above

Recall: in a Bayesian well-specified context, calibration holds for all dataset sizes!

This suggests calibration could be useful to detect mis-specification…

From calibration to goodness-of-fit

Question: Can we do a goodness-of-fit check on a latent variable \(X\)?

No: in a real data analysis scenario, \(x\) is unknown, so we cannot check if \(x\) is contained in a corresponding credible interval.

Suggestions?

Review: prediction

- Recall that Bayesian models can be used to predict the next observation, \(y_{n+1}\).

- We did this…

  - mathematically,

  - in simPPLe,

  - in the first quiz,

  - and in this week’s exercise you will do it in Stan using generated quantities.

Question: Can we do a goodness-of-fit check on a prediction?

- Yes

- Yes, but only for discrete variables

- Yes, but only for continuous variables

- No

Yes: using a universally applicable leave-one-out technique:

- instead of giving all \(n\) data points to Stan, give only the first \(n-1\) data points,

- leave data point \(n\) out (hence the name).

- Compute, say, a \(99\%\) credible interval \(C\) predicting the \(n\)-th observation based on data \(y_1, \dots, y_{n-1}\).

- If \(y_n \notin C\): output a warning.
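The steps above can be sketched in a few lines of Python. This is only an illustration under strong assumptions that are not part of the course material: a conjugate normal model with known noise standard deviation, so the posterior predictive is available in closed form and no MCMC is needed. The function name `loo_predictive_check` and all parameter names and default values are hypothetical.

```python
import math
from statistics import NormalDist


def loo_predictive_check(y, prior_mean=0.0, prior_sd=10.0, noise_sd=1.0, level=0.99):
    """Leave the last point of y out and check it against a predictive interval.

    Assumed model (illustrative only):
        mu ~ Normal(prior_mean, prior_sd^2),  y_i | mu ~ Normal(mu, noise_sd^2).
    Returns ((lower, upper), warning).
    """
    held_out = y[-1]   # data point n, left out
    train = y[:-1]     # first n-1 data points
    n_train = len(train)

    # Conjugate update for the unknown mean mu given the first n-1 points.
    prior_prec = 1.0 / prior_sd**2
    data_prec = n_train / noise_sd**2
    post_var = 1.0 / (prior_prec + data_prec)
    post_mean = post_var * (prior_mean * prior_prec + sum(train) / noise_sd**2)

    # Posterior predictive for the next observation: posterior uncertainty
    # about mu plus observation noise.
    predictive = NormalDist(post_mean, math.sqrt(post_var + noise_sd**2))

    # Equal-tailed credible interval C for the held-out observation.
    alpha = 1.0 - level
    lower = predictive.inv_cdf(alpha / 2)
    upper = predictive.inv_cdf(1 - alpha / 2)

    warning = not (lower <= held_out <= upper)
    return (lower, upper), warning


# The last point is far from the others, so the check raises a warning.
interval, warning = loo_predictive_check([0.1, -0.3, 0.2, 0.0, 8.0])
print(interval, warning)
```

With a model fitted by MCMC, the interval would instead be computed from posterior predictive samples, but the logic of the check is the same.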

Posterior predictive check

- Let \(C(y)\) denote a 99% credible interval computed from data \(y\).

- Let \(y_{\backslash n}\) denote the data excluding point \(n\).

- Output a warning if \(y_n \notin C(y_{\backslash n})\).

Proposition: if the model is well-specified, \[\mathbb{P}(Y_n \in C(Y_{\backslash n})) = 99\%.\]

Proof: special case of our generic result on calibration of credible intervals.
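The proposition can be checked numerically with a small simulation. The sketch below again assumes a conjugate normal model (an assumption made here for convenience, not the model used in the course): it repeatedly draws a parameter from the prior, generates a dataset, and records how often the held-out point falls inside its predictive interval. The names `covers` and `estimate_coverage`, the hypothetical `true_noise_sd` argument, and all default values are illustrative choices.

```python
import math
import random
from statistics import NormalDist


def covers(y, prior_mean, prior_sd, noise_sd, level=0.99):
    """True if the last point of y lies in the predictive interval from the rest."""
    train, held_out = y[:-1], y[-1]
    post_var = 1.0 / (1.0 / prior_sd**2 + len(train) / noise_sd**2)
    post_mean = post_var * (prior_mean / prior_sd**2 + sum(train) / noise_sd**2)
    predictive = NormalDist(post_mean, math.sqrt(post_var + noise_sd**2))
    alpha = 1.0 - level
    return predictive.inv_cdf(alpha / 2) <= held_out <= predictive.inv_cdf(1 - alpha / 2)


def estimate_coverage(n_reps=20000, n=5, prior_mean=0.0, prior_sd=2.0,
                      noise_sd=1.0, true_noise_sd=None, seed=1):
    """Monte Carlo estimate of P(Y_n in C(Y_without_n)).

    If true_noise_sd is None, data come from the same model used for
    inference (well-specified). Otherwise the data are noisier than the
    model believes (mis-specified).
    """
    rng = random.Random(seed)
    data_sd = noise_sd if true_noise_sd is None else true_noise_sd
    hits = 0
    for _ in range(n_reps):
        mu = rng.gauss(prior_mean, prior_sd)              # parameter from the prior
        y = [rng.gauss(mu, data_sd) for _ in range(n)]    # synthetic dataset
        hits += covers(y, prior_mean, prior_sd, noise_sd)
    return hits / n_reps


print("well-specified:", estimate_coverage())
print("mis-specified: ", estimate_coverage(true_noise_sd=3.0))
```

In the well-specified run the estimated coverage should be close to 99%, up to Monte Carlo error, even though each dataset has only five points. In the mis-specified run, where the data are noisier than the model assumes, coverage drops well below 99%, which is what makes the check informative.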

Question: what are the potential cause(s) of a posterior predictive “warning” (i.e., \(y_n \notin C(y_{\backslash n})\))?

- (a) Model mis-specification.

- (b) Posterior is not approximately normal.

- (c) MCMC too slow and/or not enough samples.

- (d) Bad luck.

- (e) Software defect.

- a, b

- a, b, c

- a, c, e

- a, c, d, e

- None of the above

- (a) Model mis-specification.

  - Yes, as the name of this page suggests!

- (b) Posterior is not approximately normal.

  - No, that’s irrelevant to the present situation.

- (c) MCMC too slow and/or not enough samples.

  - Yes, and we will talk more about it this week.

- (d) Bad luck.

  - Yes, even in the well-specified case, there is a (100-99)% = 1% chance that the warning is issued (related to so-called “type I error” in frequentist statistics).

- (e) Software defect.

  - Yes, and we will talk more about it this week.

- So the correct answer is a, c, d, e.

- As you can see, there are many other possible causes (c, d, e) on top of the one we are interested in (a)…

- …which complicates the interpretation of posterior predictive checks.

- We will see next some strategies to address c and e.
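To illustrate why too few Monte Carlo samples can trigger a spurious warning, the sketch below estimates a 99% interval from simulated draws and measures how much its upper endpoint varies from run to run. It assumes, purely for illustration, that the predictive distribution is standard normal and that the draws are independent (real MCMC draws are correlated, which makes matters worse). The helper names `mc_interval` and `upper_endpoint_spread` are hypothetical.

```python
import random
import statistics


def mc_interval(samples, level=0.99):
    """Equal-tailed interval from Monte Carlo draws via order statistics."""
    ordered = sorted(samples)
    alpha = 1.0 - level
    lo_idx = int((alpha / 2) * (len(ordered) - 1))   # index of the lower endpoint
    hi_idx = len(ordered) - 1 - lo_idx               # symmetric upper endpoint
    return ordered[lo_idx], ordered[hi_idx]


def upper_endpoint_spread(n_samples, n_reps=100, seed=0):
    """Standard deviation of the estimated upper endpoint across repeated runs."""
    rng = random.Random(seed)
    uppers = []
    for _ in range(n_reps):
        draws = [rng.gauss(0.0, 1.0) for _ in range(n_samples)]
        uppers.append(mc_interval(draws)[1])
    return statistics.stdev(uppers)


print("spread with   50 draws:", upper_endpoint_spread(50))
print("spread with 2000 draws:", upper_endpoint_spread(2000))
```

With only 50 draws the estimated endpoint moves around much more than with 2000 draws, so a held-out point near the boundary of the exact interval can land inside or outside the estimated interval by chance alone.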