suppressPackageStartupMessages(library(cmdstanr))Effective sample size

Outline

Topics

- Monte Carlo standard error (MCSE)

- Effective sample size (ESS)

- Mathematical underpinning: central limit theorem for Markov chains

Rationale

After running an MCMC chain and convincing yourself the chain is mixing well, the next question is: how many digits are reliable when reporting an MCMC approximation (e.g., a posterior mean)?

To answer this question, we will encounter an MCMC version of the notion of effective sample size. This is distinct from SNIS’s ESS but has the same underlying intuition.

Context

Two types of errors involved in Bayesian analysis based on Monte Carlo (see also: the two kinds of asymptotics):

- Statistical error: inherent uncertainty due to e.g., the fact that we have finite data.

- Computational error: additional error due to the fact that we use an approximation of the posterior instead of the exact posterior.

- This page focuses on the second type of error.

- Full picture should include both (i.e., the first step of this joke can be taken seriously).

- Interestingly, the mathematical toolbox to study 2 is similar to the non-Bayesian toolbox (i.e. normal approximation) for studying 1.

Bayesian statisticians using central limit theorems?

Earlier on, when building credible interval, (a Bayesian measure of statistical error) we avoided central limit theorems.

Question: why are Bayesians OK with using central limit theorems for MCMC error analysis (i.e., for computational error)?

- Actually, Bayesians sometimes use CLTs for building credible intervals.

- Because it is often harder to increase the number of MCMC iterations \(M\) compared to the number of data points.

- Because it is often easier to increase the number of MCMC iterations \(M\) compared to the number of data points.

- MCMC error analysis is less important.

- Bayesians are not completely happy with CLT-based MCMC error analysis.

Best answer is: #3.

In fact, in some situation it may be impossible to increase the number of data points. For example, in the Ariane 1 success rate analysis we will never get more datapoints since this type of rocket is discontinued.

Answer #1 is also acceptable: some approaches use the Laplace approximation instead of MCMC, which is motivated by the central limit theorem (more precisely, the Bernstein-von Mises theorem).

Answer #5 is also acceptable: there is indeed ongoing research on so called “non-asymptotic” approaches to bound the error of MCMC algorithms. See for example Latuszynski et al., 2013 and Paulin, 2015.

Executive version

How many digits are reliable?

Suppose you are approximating a posterior mean in Stan. We now show how to determine how many digits are reliable:

- print the

fitobject, - roughly twice1 the column

mcse_meanprovides the radius of a 95% confidence interval.

Example: our simple doomsday model…

doomsday.stan

data {

real y;

}

parameters {

real<lower=0, upper=5> x;

}

model {

x ~ uniform(0, 5);

y ~ uniform(0, x);

}

mod = cmdstan_model("doomsday.stan")

fit = mod$sample(

data = list(y = 0.06),

output_dir = "stan_out",

chains = 1,

seed = 1,

refresh = 0,

iter_sampling = 20000

)fit$summary(NULL, c("mean", "sd", "mcse_mean", "ess_bulk"))# A tibble: 2 × 5

variable mean sd mcse_mean ess_bulk

<chr> <dbl> <dbl> <dbl> <dbl>

1 lp__ -0.365 0.640 0.0105 3716.

2 x 1.11 1.25 0.0198 3716.Question: construct a 95% confidence interval for the posterior mean of \(X\). Is the true value contained in the interval?

- \([1.11 \pm 0.01]\), and the true value is contained in the interval

- \([1.11 \pm 0.01]\), and the true value is not contained in the interval

- \([1.11 \pm 0.04]\), and the true value is contained in the interval

- \([1.11 \pm 0.04]\), and the true value is not contained in the interval

- None of the above

In the above: \([x \pm r]\) denotes the confidence interval \([x - r, x + r]\)

Reading off this table we have:

- Estimate is

1.11, - twice the standard error gives

0.04(from the columnmcse_mean, which stands for “Monte Carlo standard error for the posterior mean”).

From previous calculations, we know the true answer is \(\approx 1.117\), hence the true value is contained in the interval, as expected for approximately2 95% of the random seeds passed to Stan.

Mathematical underpinnings

We answer two questions:

- How can we compute Monte Carlo Standard Errors (MCSE) (i.e., numbers such as in

mcse_mean)? - What underlying theory justifies that computation?

Along the way we define the notion of Effective Sample Size (ESS) for MCMC.

Background

- Recall the central limit theorem (CLT) for independent and identically distributed (i.i.d.) random variables:

- if some random variables \(V_i\) are i.i.d. and

- each has finite variance, then we have3 \[\sqrt{n}(\bar V - \mu) \to \mathcal{N}(0, \operatorname{SD}[V]), \tag{1}\] where \(\bar V = \frac{1}{n} \sum_{i=1}^n V_i\) and \(\mu = \mathbb{E}[V]\).

- From the central limit theorem, recall that standard frequentist arguments give: \[\mathbb{P}(\mu \in [\bar V \pm 1.96 \text{SE}]) \approx 95\%, \tag{2}\] where: the Standard Error (SE) is given by \(\text{SE} = \operatorname{SD}[V] / \sqrt{n}\).

Central limit theorem (CLT) for Markov chains

- We would like to have something like Equation 2 for our MCMC algorithm,

- however, the samples from MCMC have dependence, they are not i.i.d….

- … so we cannot use the above i.i.d. central limit theorem.

- But fortunately there is generalization of the central limit theorem that applies!

- Namely: The central limit theorem for Markov chains.

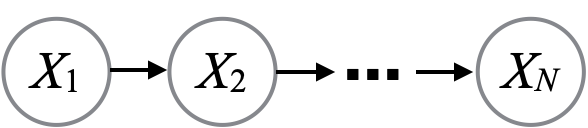

Definition: the random variables \(X^{(1)}, X^{(2)}, \dots\) are called a Markov chain if they admit the following “chain” graphical model.

- Here we state a version of the CLT for Markov chains specialized to our situation:

- Let \(X^{(1)}, X^{(2)}, \dots\) denote the states visited by an MH algorithm.

- \(\mu = \int x \pi(x) \mathrm{d}x\) is the quantity we seek to approximate,

- i.e., a posterior mean.

- Recall \(\pi(x) = \gamma(x) / Z\), where \(\gamma(x) = p(x, y)\) and \(Z = p(y)\).

- Let \(\bar X\) denote our MCMC estimator, i.e., the average of the samples.

Theorem: assuming \(\sigma^2 = \int (x - \mu)^2 \pi(x) \mathrm{d}x < \infty\) (in our context: posterior variance is finite) and under appropriate fast mixing conditions,4 \[\sqrt{n}(\bar X - \mu) \to \mathcal{N}(0, \sigma_a), \tag{3}\] where the constant \(\sigma^2_a > 0\) is known as the asymptotic variance.

Asymptotic variance

Notice: there is a difference between the limiting distributions in the i.i.d. and Markov CLTs (Equation 1 and Equation 3)!

- For i.i.d. CLT: variance of the limiting distribution is equal to the variance of \(X_1\).

- For Markov CLT: we left the variance of the limiting distribution more vague (\(\sigma_a^2\)).

Intuition: because of the dependence between MCMC iterations, the noise of the approximation can be larger compared to the i.i.d. setting.5

Effective sample size

The MCMC Effective Sample Size (ESS) is an answer to the following:

Question: How many i.i.d. samples \(n_e\) would be equivalent6 to my \(M\) samples obtained from MCMC?

Apply the following formula, \(\operatorname{Var}[a X + b] = a^2 \operatorname{Var}[X]\) to both the CLT for i.i.d. samples, and then the CLT for Markov chains.

- \[n_e = \frac{\sigma^2}{\sigma_a^2} M.\]

- \[n_e = \frac{\sigma}{\sigma_a} M.\]

- \[n_e = \frac{\sigma_a^2}{\sigma^2} M.\]

- \[n_e = \frac{\sigma_a}{\sigma} M.\]

- None of the above

CLT for Markov chains gives: \[\sqrt{M} (\bar X_\text{Markov} - \mu) \approx \sigma_a G,\] where \(G\) is standard normal.

Taking the variance on both sides: \[M \operatorname{Var}[\bar X_\text{Markov}] \approx \sigma_a^2.\]

CLT for i.i.d. gives: \[\sqrt{n_e} (\bar X_\text{iid} - \mu) \approx \sigma G\]

Taking the variance on both sides (we could actually bypass the CLT argument and show the equality below directly in the i.i.d. case): \[n_e \operatorname{Var}[\bar X_\text{iid}] \approx \sigma^2.\]

We have \(n_e\) is defined as having the property that \(\operatorname{Var}[\bar X_\text{iid}] = \operatorname{Var}[\bar X_\text{Markov}]\), so after some algebra: \[n_e = \frac{\sigma^2}{\sigma_a^2} M.\]

Estimating the asymptotic variance with many chains

- There are many ways to estimate the asymptotic variance (see references below).

- We start with the simplest possible scenario:

- suppose we have \(C\) independent chains

- each with \(S\) MCMC samples.

- Since we have \(C\) chains, we get \(C\) different Monte Carlo estimators:

- \(E_1, E_2, \dots, E_C\).

- Denote also the overall estimator by: \[E = \frac{1}{C} \sum_{c=1}^C E_c.\]

- First, by the CLT for Markov chains: for any \(c\), \[S \operatorname{Var}[E_c] \approx \sigma^2_a. \tag{4}\]

- Second, since the \(E_1, \dots, E_C\) are i.i.d., \[\operatorname{Var}[E_c] \approx \frac{1}{C} \sum_{c=1}^C (E_c - E)^2. \tag{5}\]

Question: combine Equation 4 and Equation 5 to obtain an estimator for the asymptotic variance.

- \[\sigma_a \approx C \left( \frac{1}{S} \sum_{c=1}^C (E_c - E)^2\right)\]

- \[\sigma_a \approx S \left( \frac{1}{C} \sum_{c=1}^C (E_c - E)^2\right)\]

- \[\sigma_a^2 \approx C \left( \frac{1}{S} \sum_{c=1}^C (E_c - E)^2\right)\]

- \[\sigma_a^2 \approx S \left( \frac{1}{C} \sum_{c=1}^C (E_c - E)^2\right)\]

- None of the above

Combining the two equations, we obtain:

\[\sigma_a^2 \approx S \left( \frac{1}{C} \sum_{c=1}^C (E_c - E)^2\right).\]

Estimating the asymptotic variance with one chain

- View a trace of length \(M\) as \(C\) subsequent batches of length \(S\) for \(M = C \cdot S\).

- Common choice: \(C = S = \sqrt{M}\).

- This is known as the batch mean estimator.

Additional references

- See Flegal, 2008 for a nice exposition on the technique described here for estimating the asymptotic variance, known as the batch mean estimator, as well as many other methods for asymptotic variance estimation.

- See Vats et al., 2017 for a multivariate generalization of the effective sample size.

Footnotes

Where does “twice” come from? More precisely, it is \(1.96\), which comes from the quantile function of the normal evaluated at \(100\% - 5\%/2\):

↩︎qnorm(1-0.025)[1] 1.959964It is only approximate, since as we shall see the standard error estimator uses central limit theorem approximations.↩︎

In this page, \(\to\) refers to convergence in distribution.↩︎

Several different conditions can be used to state the central limit theorem for Markov chains, see Jones, 2004 for a review. For example, Corollary 4 in that review can be used since the MH algorithm is reversible as we will see soon. Corollary 4 requires the following conditions: reversibility, a finite variance, \(\sigma^2 < \infty\) (reasonable, as the CLT for i.i.d. requires this as well), geometric ergodicity (which we discussed in a footnote of MCMC diagnostics), and Harris ergodicity, which is more of a technical condition that would be covered in a Markov chain course.↩︎

Interestingly, the noise can also be lower in certain situations! These are called super-efficient MCMC algorithms. Consider for example an MH algorithm over the state space \(\{1, 2, 3\}\) that proposes, at each iteration, uniformly over \(\{1, 2, 3\}\) while excluding its current position. It can be shown that this algorithm will have lower variance compared to the i.i.d. simple Monte Carlo algorithm.↩︎

By equivalent, we mean: “have the same variance”.↩︎