MH as a Markov chain

Outline

Topics

- Homogeneous Markov chains

- Transition kernel

- Initial distribution

- MH as a Markov chain

Rationale

Why is the MH ratio defined as \(\gamma(x')/\gamma(x)\)?

If we modified the MH ratio, would MCMC still “works”?

Recall: by “MCMC still works” we mean “MCMC is still consistent” i.e. admits a law of large numbers.

Markov chain definitions

To answer the question above:

- we need to study in more detail the law of large numbers for Markov chains.

- We will need some concepts related to Markov chains

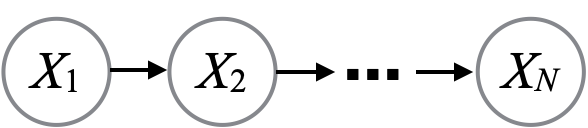

Recall: the random variables \(X^{(1)}, X^{(2)}, \dots\) are called a Markov chain if they admit the following “chain” graphical model.

Note: today we will assume the state space is discrete, we’ll relax this soon.

Definition: let \(K_m(x' | x) = \mathbb{P}(X^{(m+1)} = x' | X^{(m)}= x)\). We call \(K_m\) the transition kernel (for the \(m\)-th step).

Definition: if all the transition kernels are equal, \(K_m = K\) for some \(K\), we say the Markov chain is homogeneous.

Definition: the marginal distribution of the first state, \(X^{(1)}\) is called the initial distribution.

The MH algorithm as a Markov chain

Recall MH involves:

- a proposal \(q(x'|x)\)

- a MH ratio \(r(x, x') = \gamma(x') / \gamma(x)\), and

- an acceptance probability \(\alpha(x, x') = \min(1, r(x, x'))\).

Question: write the transition kernel \(K(x' | x)\) for the MH chain, assuming \(x \neq x'\).

- \(q(x' | x)\)

- \(q(x' | x) \alpha(x, x')\)

- \(\alpha(x, x')\)

- \(\sum_{x'} q(x' | x) (1 - \alpha(x, x'))\)

- None of the above.

The probability to move to \(x' \neq x\) requires both:

- proposing to \(x'\), with probability \(q(x'|x)\), and,

- accepting the proposal, with probability \(\alpha(x, x')\).

The correct answer is \(q(x' | x) \alpha(x, x')\).

Intuition: the “and” in the hint suggests chain rule hence multiplication.

Mathematical derivation: first denote

- the current state by \(X\)

- the proposed state by \(X^* \sim q(\cdot|X)\)

- the next state by \(X'\) (equal to either \(X^*\) if the proposal is accepted, \(X\) otherwise).

\[\begin{align*} K(x'|x) &= \mathbb{P}(X' = x' | X = x) \\ &= \sum_{x^*} \mathbb{P}(X' = x', X^* = x^* | X = x) \;\;\text{(marginalization)} \\ &= \sum_{x^*} \mathbb{P}(X^* = x^* | X = x) \mathbb{P}(X' = x' | X^* = x^*, X = x) \;\;\text{(chain rule)}\\ &= \sum_{x^*} q(x^*|x) \mathbb{P}(X' = x' | X^* = x^*, X = x). \;\;\text{(definition of proposal)} \end{align*}\]

- Since \(x \neq x'\), we can only get to \(x'\) by accepting a proposal…

- …, i.e. when \(x' = x^*\).

- So there is only one term in the sum where \(\mathbb{P}(X' = x' | X^* = x^*, X = x) > 0\)…

- …, namely when \(x' = x^*\).

\[\begin{align*} K(x'|x) &= \sum_{x^*} q(x^*|x) \mathbb{P}(X' = x' | X^* = x^*, X = x) \\ &= q(x'|x) \mathbb{P}(X' = x' | X^* = x', X = x) \;\;\text{(see expl. above)} \\ &= q(x'|x) \alpha(x, x'). \;\;\text{(definition of accept probability)} \\ \end{align*}\]

Note: the case \(x = x'\) is a bit more complicated, because there are two situations where MH stays at the same state, namely,

- the proposal tells us to stay at the current state (possible with discrete proposals only) and we accept that proposal, or,

- the proposal tries to go somewhere (including \(x\)) but the proposal is rejected.

Notice that the two situations are disjoint, so using the additivity axiom:

\[K(x | x) = q(x|x) \alpha(x, x) + \sum_{x'} q(x'|x) ( 1- \alpha(x, x')).\]

The above equation might be impossible to numerically compute in certain situations. Fortunately, we do not need to compute it to run MCMC, we only need to simulate from \(K(\cdot | x)\). Why do we spend time writing expressions for \(K(x'|x)\) then? In order to do mathematical analysis, which gives us useful guarantees, such as law of large numbers and central limit theorems.